In late 2022, when I began testing ChatGPT for my software development work, I was amazed that it could generate code that simply worked. After many months of use, that changed. One day, I asked the AI to help me with a database rollback, and it confidently gave me a wrong answer: it told me that my data was “destroyed forever.” When I checked manually, I was able to roll it back successfully.

After this experience, I realized that there is one question many users are only beginning to ask: Can AI chatbots make mistakes? This article explores why AI systems can be wrong, the types of errors they make, and how to manage them effectively.

So, can AI chatbots make mistakes?

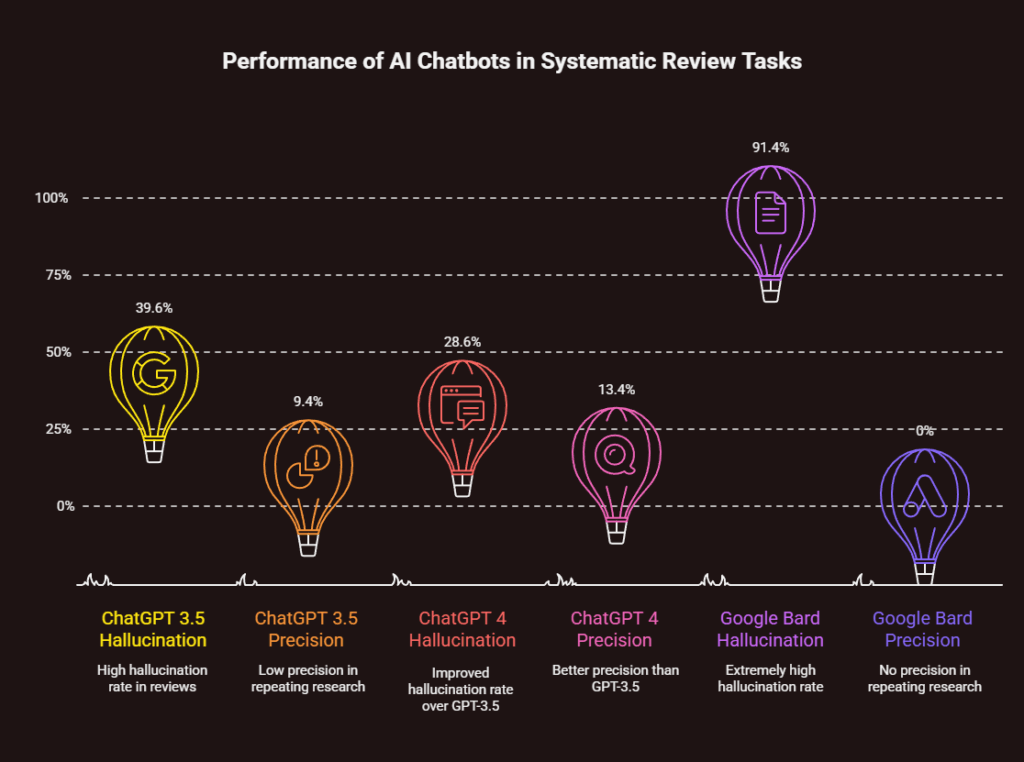

Yes, AI chatbots can and do make mistakes regularly. A 2024 study published in the Journal of Medical Internet Research found that popular chatbots such as ChatGPT generated false information at rates ranging from 28.6% to 91.4%, depending on the model. The errors range from wrong facts to made-up references, all delivered with the same confidence.

The Scale of AI Chatbot Errors: What Recent Research Reveals

The question “Can AI chatbots make mistakes?” has been heavily researched. When I looked at recent findings from medical institutions that have been testing AI for reliability, I found error rates that would shock most casual users.

A comprehensive study published in the Journal of Medical Internet Research examined three major AI chatbots (GPT-3.5, GPT-4, and Google Bard) across 471 references in systematic review tasks. The hallucination rates were striking: 39.6% for GPT-3.5, 28.6% for GPT-4, and 91.4% for Bard. More worrying still, the models achieved a precision of just 9.4%, 13.4%, and 0% respectively when attempting to replicate the literature searches done by human researchers.
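To make these two percentages concrete, here is a minimal sketch of how such metrics are usually computed. It assumes the common definitions (hallucination rate as the share of generated references that match no real paper, precision as the share that also appear in the human-written review); the function names and the counts in the example are made up for illustration and are not taken from the study.

```python
def hallucination_rate(generated: int, fabricated: int) -> float:
    """Share of generated references that match no real paper."""
    if generated <= 0:
        raise ValueError("generated must be positive")
    if not 0 <= fabricated <= generated:
        raise ValueError("fabricated must be between 0 and generated")
    return fabricated / generated


def precision(generated: int, matching_human: int) -> float:
    """Share of generated references that the human review also cited."""
    if generated <= 0:
        raise ValueError("generated must be positive")
    if not 0 <= matching_human <= generated:
        raise ValueError("matching_human must be between 0 and generated")
    return matching_human / generated


# Hypothetical counts, NOT figures from the study.
example_generated = 50
example_fabricated = 20
example_matching = 5

print(f"hallucination rate: {hallucination_rate(example_generated, example_fabricated):.1%}")
print(f"precision: {precision(example_generated, example_matching):.1%}")
```

Note that the two numbers need not add up to 100%: a reference can be real and still not be one the human reviewers selected.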

When I tested other AI platforms for content creation and research assistance, I noticed similar quirks. Even the best models present their answers confidently, yet on checking, the information is often inaccurate and sometimes completely wrong.

Exploring the Question: Can AI Chatbots Make Mistakes and Why

The Fundamental Misunderstanding About AI “Intelligence”

People often chat with chatbots as if they were talking to a real human. Giving machines human characteristics creates dangerous misperceptions about how these systems work. When I ask ChatGPT to explain a complex engineering term, I am not speaking to a machine that understands it; I am asking a predictive text engine to generate a plausible-sounding answer.

As Ars Technica’s analysis of chatbot limitations explains, there’s “nobody home” when you interact with these systems. The conversational interface may seem intelligent, but it is simply a statistical model that creates text based on probabilities, rather than an intelligent machine capable of rational thinking.

The Impossibility of True Self-Assessment

One big danger of AI chatbot mistakes is that these systems don’t know their own strengths and weaknesses. When I ask ChatGPT why it gave me wrong information, its replies sound reasonable but are themselves fabricated. The AI provides plausible-sounding explanations because that’s what pattern completion requires, not because it has any insight into its failures.

Research on Recursive Introspection has shown that attempts at AI self-correction degrade model performance rather than improve it. Essentially, asking the AI to verify its output or explain its rationale typically results in more errors and not fewer.

Real-World Consequences of AI Chatbot Mistakes

Professional and Academic Disasters

AI chatbot errors can lead to more than just inconvenience. I have seen documented cases where lawyers were sanctioned for citing fake legal cases generated by ChatGPT. Similarly, doctors have made treatment decisions based on AI-supplied information that turned out to be wrong.

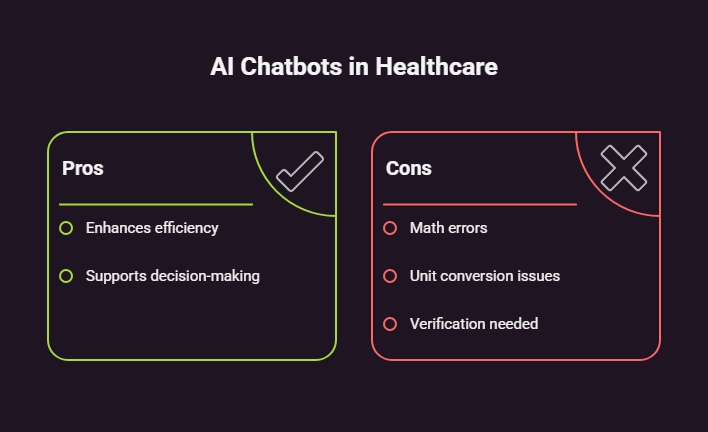

In one particularly concerning study, researchers asked AI chatbots healthcare-related questions and found that some responses “could result in clinical decisions leading to harm.” The research identified drug calculation errors, unit mistakes, and treatment errors that could compromise patient safety.

The Reference Fabrication Problem

A particularly insidious AI error is the generation of references that sound plausible but are completely made up. While researching this post, I found multiple cases where chatbots offered detailed bibliographic information about non-existent papers.

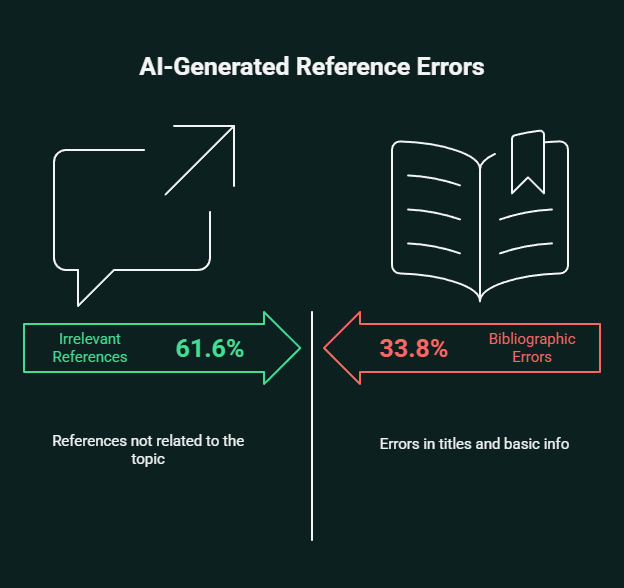

A medical study using the Reference Hallucination Score (RHS) found that 61.6% of AI-generated references were irrelevant to the requested topic, while 33.8% contained errors in basic bibliographic information like titles, as reported in JMIR Medical Informatics. Researchers, students, and professionals might unknowingly cite this false information, putting their own work and credibility at risk.

Types of AI Chatbot Mistakes and How to Recognize Them

Computational and Mathematical Errors

During my testing, I found that AI chatbots struggle with math and unit conversion. In healthcare scenarios, for example, ChatGPT has been documented with a 12% error rate in creatinine clearance calculations and has incorrectly assumed units of measurement without verification, as reported in BMC Medical Informatics and Decision Making.

Whenever I use the AI for calculations, I always cross-verify the results. The confidence with which the AI presents numerical results is not correlated with their accuracy.
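As an illustration of what cross-verifying a calculation can look like, here is a small sketch that recomputes creatinine clearance independently and refuses to guess the unit. It assumes the Cockcroft-Gault formula, which the cited study may or may not have used; the function name and parameters are my own, and this is a demonstration of the checking habit, not a clinical tool.

```python
# Serum creatinine: 1 mg/dL is approximately 88.4 µmol/L.
UMOL_PER_L_IN_ONE_MG_PER_DL = 88.4


def creatinine_clearance(age_years: float, weight_kg: float,
                         serum_creatinine: float, unit: str,
                         female: bool) -> float:
    """Estimate creatinine clearance in mL/min (Cockcroft-Gault, assumed here).

    The unit must be given explicitly; silently assuming it is exactly
    the kind of mistake described above.
    """
    if unit == "mg/dL":
        scr_mg_dl = serum_creatinine
    elif unit == "umol/L":
        scr_mg_dl = serum_creatinine / UMOL_PER_L_IN_ONE_MG_PER_DL
    else:
        raise ValueError(f"unknown creatinine unit: {unit!r}")
    if scr_mg_dl <= 0:
        raise ValueError("serum creatinine must be positive")

    clearance = (140 - age_years) * weight_kg / (72 * scr_mg_dl)
    return clearance * 0.85 if female else clearance


# Hypothetical patient: the same lab value expressed in two units
# should give the same estimate.
in_mg_dl = creatinine_clearance(60, 72, 1.0, "mg/dL", female=False)
in_umol_l = creatinine_clearance(60, 72, 88.4, "umol/L", female=False)
print(round(in_mg_dl, 1), round(in_umol_l, 1))
```

If the 88.4 µmol/L value were mistakenly treated as mg/dL, the estimate would come out roughly 88 times too small, which is why the sketch raises an error on an unrecognized unit rather than picking a default.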

Hallucinated Sources and References

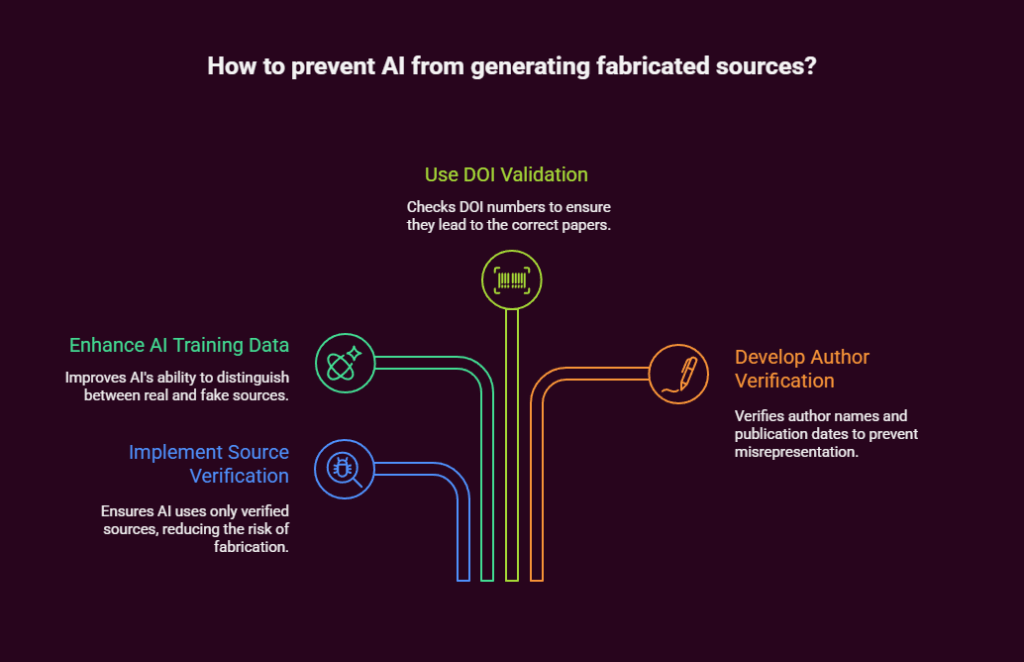

It is highly unsafe and can lead to serious problems when AI generates nonexistent sources that sound credible. These fabricated references often include:

- Names of real research journals and articles.

- Plausible author names and publication dates.

- In-depth summaries that match the requested subject.

- DOI numbers that lead nowhere or to totally different papers.
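The last item in that list is one of the easier ones to check mechanically. The sketch below is one possible approach, under stated assumptions: the DOI pattern is a loose approximation of the usual `10.<registrant>/<suffix>` shape, `lookup_title` is a hypothetical callable standing in for whatever DOI registry query you use, and the registry in the example is a placeholder with an invented entry.

```python
import re
from typing import Callable, Optional

# Loose approximation of DOI syntax; real DOIs can be more varied.
DOI_PATTERN = re.compile(r"^10\.\d{4,9}/\S+$")


def normalize(title: str) -> str:
    """Lowercase and collapse punctuation so near-identical titles compare equal."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def check_reference(claimed_title: str, doi: str,
                    lookup_title: Callable[[str], Optional[str]]) -> str:
    """Classify a chatbot-supplied reference.

    lookup_title is a hypothetical hook: it returns the title registered
    for a DOI, or None if the DOI does not resolve.
    """
    if not DOI_PATTERN.match(doi):
        return "malformed DOI"
    registered_title = lookup_title(doi)
    if registered_title is None:
        return "DOI not found"
    if normalize(registered_title) != normalize(claimed_title):
        return "DOI points to a different paper"
    return "ok"


# Placeholder registry with an invented entry, for demonstration only.
fake_registry = {"10.1234/example.2024.001": "A Real Paper Title"}

print(check_reference("A real paper title.", "10.1234/example.2024.001", fake_registry.get))
print(check_reference("An Invented Study", "10.1234/example.2024.001", fake_registry.get))
print(check_reference("An Invented Study", "10.1234/missing", fake_registry.get))
```

A passing check only shows that the DOI and title agree; it says nothing about whether the paper actually supports the claim the chatbot attached to it, so reading the source is still required.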

Contextual Misunderstanding

AI chatbots often overlook basic contextual cues that experts would easily identify.

In medical requests, AI often provides generic advice that ignores conditions specified in the prompt. For example, when asked how to treat low blood sugar in a patient with celiac disease, ChatGPT gave standard recommendations for treating hypoglycemia, without regard for gluten-containing products that would harm this patient.

The Business and Strategic Implications

Impact on Professional Workflows

As a heavy user of AI in my work life, I see the points within organisations where chatbot errors creep in. Basing business decisions on faulty AI-generated code, analysis, or recommendations can be very costly.

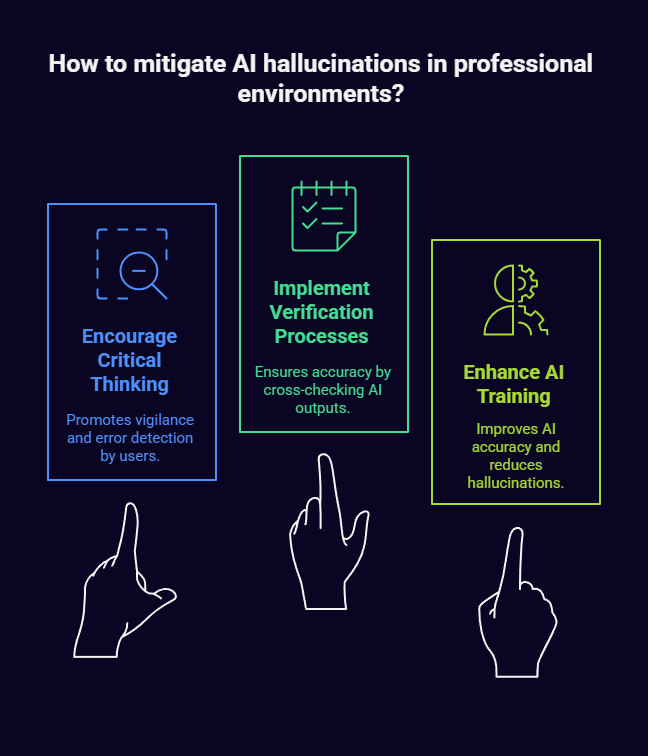

According to a Nielsen Norman Group analysis, AI hallucinations particularly threaten professional environments where accuracy is crucial. Sometimes, AI output is so polished that it discourages the critical thinking necessary to catch mistakes.

The Verification Burden

Given AI’s fallibility, users face a heavy verification burden. Every AI-generated output must be fact-checked, which can slow processes down rather than speed them up. In my experience, AI generation saves some time, but you can spend that time, and then some, on verification.

Strategies for Managing AI Chatbot Mistakes

Implementing Systematic Verification Processes

From extensive use of AI tools, I have developed several strategies for limiting the damage caused by AI mistakes.

Never rely on AI outputs without validating:

- Calculations and data analysis.

- Professional or academic references.

- Information regarding health, law, safety, etc.

- Technical specifications or grammar rules.

Use AI as a starting point, not an endpoint. I treat AI content as a rough draft that needs heavy human checking and fixing.

Cross-reference with authoritative sources. I double-check claims against sound professional references, peer-reviewed literature, or formal documents for all vital info.

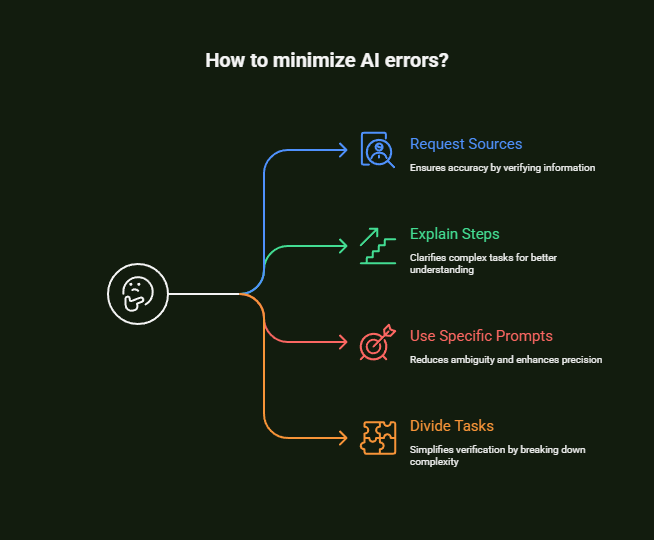

Prompting Strategies That Reduce Errors

Although no prompting method can completely prevent AI errors, some methods can limit them.

- Ask the chatbot for sources and references upfront.

- Ask it to explain its steps when solving complicated problems.

- Use specific, detailed prompts rather than vague questions.

- Divide difficult tasks into small components that can be verified.

It is important to note that these methods can fail too. Given the basic limitations of the technology, errors can occur with any prompting method.

The Future of AI Reliability

Ongoing Research and Development

The AI industry is working to solve hallucination and error issues. Companies like Anthropic are investing heavily in interpretability research to better understand how their models generate responses, as highlighted in Anthropic’s official news. Nonetheless, these problems may be solved not through marginal improvements but rather through radical advancements in AI architecture.

Setting Realistic Expectations

Even as AI technology advances, users should ask, “Can AI chatbots make mistakes?” and set realistic expectations about their reliability. Future models may reduce error rates, but since current AI systems are probabilistic, some level of error is expected to exist for the foreseeable future.

Ethical Considerations and Responsible AI Use

The Responsibility of AI Developers

AI companies need to disclose the limits of their systems and the fact that they can make mistakes. In many current implementations, these issues are downplayed or buried in fine print. When I use ChatGPT, for example, the warning only reads ‘ChatGPT can make mistakes’ – a pretty small note that does not truly represent the magnitude of the reliability issues.

User Education and Digital Literacy

As AI tools become ever-present, digital literacy education should take into account their limitations and verification strategies. Users must build the capacity to critically assess AI outputs and identify likely mistakes.

Moving Forward: A Balanced Approach to AI Use

Yes, AI chatbots can make mistakes, and they make them quite often. However, this doesn’t mean AI tools are worthless. When used correctly, with verification, realistic expectations, and an understanding of their limits, AI chatbots can still be useful for brainstorming, drafting, and research.

The essential part is to develop a clear understanding of when and how to use them. Think of AI chatbots as assistants or starting points rather than authorities or final answers, as tools that supplement expert opinion rather than replace it.

As we increasingly incorporate AI into our work and home lives, we must maintain a healthy skepticism about its outputs, and not just because it’s the smart thing to do. The most successful users of AI technology that I know are those who are excited by what the future may hold, but also rigorous in their verification and aware of its current limitations.

Our future with AI relies not on trust in its reasoning, but on advanced legislation that will help us use its strengths while mitigating its weaknesses. The first step on this important journey is acknowledging that AI chatbots can and do make mistakes.